BALI — The voice sounds calm. The face looks real. The connection feels personal.

It begins, as many online relationships do, with a conversation.

Then comes the shift—subtle at first. A suggestion. An opportunity. A reason to trust. And eventually, a request for money.

What follows is not new. But how it happens is.

Across Southeast Asia, a new generation of love scams is emerging—one powered not just by deception, but by artificial intelligence sophisticated enough to simulate human presence in real time.

The result is a form of fraud that is harder to detect, more scalable, and increasingly organized.

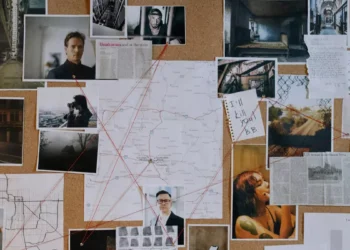

Inside the AI-Driven Scam Economy

Investigators say these operations are no longer small or improvised. They are structured, coordinated, and industrial in scale.

At the center of this system are so-called “AI models”—young recruits, often women, hired to appear on live video calls while software alters their faces in real time. The person on screen is real. The identity is not.

Hieu Minh Ngo, a cybercrime investigator with the Vietnamese nonprofit ChongLuaDao, has been tracking the trend for more than a year.

“In the past year, they have also been recruiting people to become AI models,” he told Wired. “They provide software to swap faces using AI and carry out love scams.”

The setup is deceptively simple. Recruits are offered high salaries—sometimes thousands of dollars a month—to conduct video calls. They are placed in controlled environments, often referred to as “AI rooms,” where they spend hours speaking with targets across different time zones.

Using face-swapping technology, their appearance is modified in real time to match a fabricated persona designed to attract and retain attention.

What they are building is not just a conversation, but trust.

A System Built to Scale

The operations extend far beyond individual interactions.

Investigators have identified networks of recruitment channels, often on platforms like Telegram, through which job seekers are funneled into organized fraud hubs. From there, the process becomes routine: scripts, targets, shifts, and performance metrics.

The model is scalable because it blends human interaction with automated enhancement. Unlike traditional scams that rely on text or static images, these operations simulate presence—voice, movement, expression—all in real time.

The effect is powerful.

For victims, the experience feels authentic. For operators, it is efficient.

The Hidden Cost of Participation

What is presented as opportunity can quickly become entrapment.

According to investigators, many recruits find themselves unable to leave once inside the system. Passports may be confiscated. Working hours can stretch across entire days. In some cases, individuals face intimidation or violence if they refuse to continue.

What begins as employment becomes coercion.

And what appears on screen as connection is, behind the scenes, part of a tightly controlled operation.

Why This Matters Beyond the Screen

For Bali—and for other globally connected destinations—the implications are not abstract.

The island’s large international community of expatriates and digital nomads makes it a natural target for cross-border scams. The reason is not geography but access: English-speaking users, frequent online interaction, and relative financial mobility.

Love scams have long existed. What has changed is their credibility.

A video call, once seen as a safeguard against deception, can now be manipulated. The face may be real. The interaction may feel spontaneous. But the identity behind it may be entirely constructed.

Bali is not the source of these operations. But it is firmly within their reach.

What Makes This Different

Artificial intelligence has closed a critical gap in online fraud.

For years, scammers struggled to convincingly replicate real-time human interaction. Now, with face-swapping technology and coordinated operations, that barrier is eroding.

The combination of live performance and AI enhancement allows fraudsters to do something that was previously difficult at scale: appear convincingly human, in real time, to multiple targets across the world.

For investigators, the challenge is evolving just as quickly as the technology.

For users, the challenge is more personal: knowing when to trust what they see.

A Trust Problem in Real Time

The rise of AI-powered scams marks a shift not just in technique, but in the nature of online trust.

Verification, once as simple as seeing a face or hearing a voice, now requires additional layers—cross-checking identities, questioning inconsistencies, and resisting emotional pressure tied to financial requests.

What appears genuine may still be constructed.

The New Reality

Artificial intelligence has expanded the boundaries of what is possible online.

It has also expanded the reach of those who seek to exploit it.

Across Southeast Asia, AI-driven love scams are no longer isolated incidents. They are part of a growing system—organized, adaptable, and increasingly difficult to detect.

The face on the screen may look real. The voice may sound human.

But in a world where identity can be generated in real time, trust is no longer something that can be assumed.

It is something that must be verified.